To help us locate and resolve the problem faster, please follow the template below when asking a question ^ ^

Question template:

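- Nebula version: 1.1.0

- Deployment method (distributed / standalone / Docker / DBaaS): Docker

- Hardware information

    - Disk (must be SSD; HDD is not supported)

    - CPU and memory information:

- How the affected Space was created: run describe space xxx;

- Detailed description of the problem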

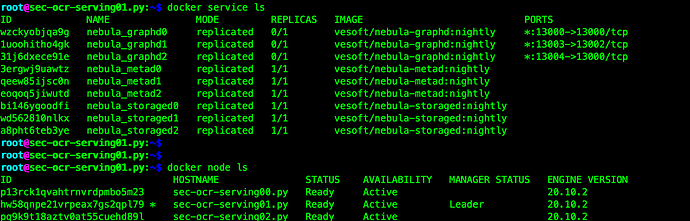

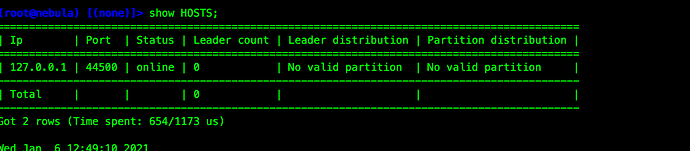

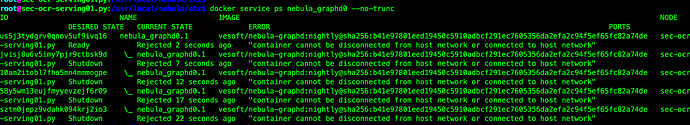

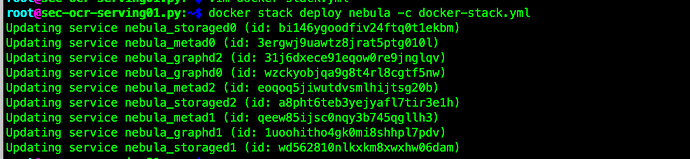

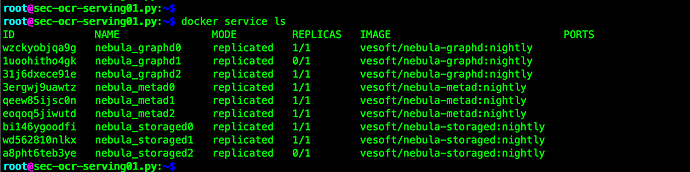

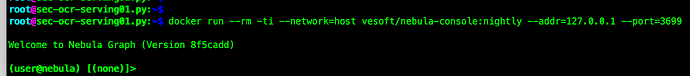

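I have finished deploying the cluster with Docker, following the documentation.

Questions:

1. How do I start the service?

I see there are two ways to start it: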

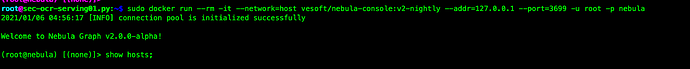

1) sudo /usr/local/nebula/bin/nebula -u -p [--addr= --port=]

2) sudo /usr/local/nebula/bin/nebula -u -p [--addr= --port=]

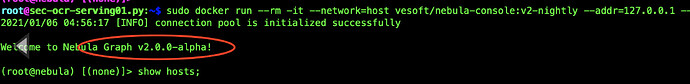

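With the first method, only one node shows up.

The second one hangs.

2. Does Studio need to be deployed on every node of the cluster, or is deploying it on the master enough? How do I configure it to connect to the cluster?

Attached docker-stack.yml: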

    version: '3.6'
    services:
      metad0:
        image: vesoft/nebula-metad:nightly
        env_file:
          - ./nebula.env
        command:
          - --meta_server_addrs=10.86.87.15:45500,10.86.87.16:45500,10.86.87.17:45500
          - --local_ip=10.86.87.16
          - --ws_ip=10.86.87.16
          - --port=45500
          - --data_path=/data/meta
          - --log_dir=/logs
          - --v=0
          - --minloglevel=2
        deploy:
          replicas: 1
          restart_policy:
            condition: on-failure
          placement:
            constraints:
              - node.hostname == sec-ocr-serving01.py
        healthcheck:
          test: ["CMD", "curl", "-f", "http://10.86.87.16:11000/status"]
          interval: 30s
          timeout: 10s
          retries: 3
          start_period: 20s
        ports:
          - target: 11000
            published: 11000
            protocol: tcp
            mode: host
          - target: 11002
            published: 11002
            protocol: tcp
            mode: host
          - target: 45500
            published: 45500
            protocol: tcp
            mode: host
        volumes:
          - data-metad0:/data/meta
          - logs-metad0:/logs
        networks:
          - nebula-net
      metad1:
        image: vesoft/nebula-metad:nightly
        env_file:
          - ./nebula.env
        command:
          - --meta_server_addrs=10.86.87.15:45500,10.86.87.16:45500,10.86.87.17:45500
          - --local_ip=10.86.87.15
          - --ws_ip=10.86.87.15
          - --port=45500
          - --data_path=/data/meta
          - --log_dir=/logs
          - --v=0
          - --minloglevel=2
        deploy:
          replicas: 1
          restart_policy:
            condition: on-failure
          placement:
            constraints:
              - node.hostname == sec-ocr-serving00.py
        healthcheck:
          test: ["CMD", "curl", "-f", "http://10.86.87.15:11000/status"]
          interval: 30s
          timeout: 10s
          retries: 3
          start_period: 20s
        ports:
          - target: 11000
            published: 11000
            protocol: tcp
            mode: host
          - target: 11002
            published: 11002
            protocol: tcp
            mode: host
          - target: 45500
            published: 45500
            protocol: tcp
            mode: host
        volumes:
          - data-metad1:/data/meta
          - logs-metad1:/logs
        networks:
          - nebula-net
      metad2:
        image: vesoft/nebula-metad:nightly
        env_file:
          - ./nebula.env
        command:
          - --meta_server_addrs=10.86.87.15:45500,10.86.87.16:45500,10.86.87.17:45500
          - --local_ip=10.86.87.17
          - --ws_ip=10.86.87.17
          - --port=45500
          - --data_path=/data/meta
          - --log_dir=/logs
          - --v=0
          - --minloglevel=2
        deploy:
          replicas: 1
          restart_policy:
            condition: on-failure
          placement:
            constraints:
              - node.hostname == sec-ocr-serving02.py
        healthcheck:
          test: ["CMD", "curl", "-f", "http://10.86.87.17:11000/status"]
          interval: 30s
          timeout: 10s
          retries: 3
          start_period: 20s
        ports:
          - target: 11000
            published: 11000
            protocol: tcp
            mode: host
          - target: 11002
            published: 11002
            protocol: tcp
            mode: host
          - target: 45500
            published: 45500
            protocol: tcp
            mode: host
        volumes:
          - data-metad2:/data/meta
          - logs-metad2:/logs
        networks:
          - nebula-net
      storaged0:
        image: vesoft/nebula-storaged:nightly
        env_file:
          - ./nebula.env
        command:
          - --meta_server_addrs=10.86.87.15:45500,10.86.87.16:45500,10.86.87.17:45500
          - --local_ip=10.86.87.16
          - --ws_ip=10.86.87.16
          - --port=44500
          - --data_path=/data/storage
          - --log_dir=/logs
          - --v=0
          - --minloglevel=2
        deploy:
          replicas: 1
          restart_policy:
            condition: on-failure
          placement:
            constraints:
              - node.hostname == sec-ocr-serving01.py
        depends_on:
          - metad0
          - metad1
          - metad2
        healthcheck:
          test: ["CMD", "curl", "-f", "http://10.86.87.16:12000/status"]
          interval: 30s
          timeout: 10s
          retries: 3
          start_period: 20s
        ports:
          - target: 12000
            published: 12000
            protocol: tcp
            mode: host
          - target: 12002
            published: 12002
            protocol: tcp
            mode: host
        volumes:
          - data-storaged0:/data/storage
          - logs-storaged0:/logs
        networks:
          - nebula-net
      storaged1:
        image: vesoft/nebula-storaged:nightly
        env_file:
          - ./nebula.env
        command:
          - --meta_server_addrs=10.86.87.15:45500,10.86.87.16:45500,10.86.87.17:45500
          - --local_ip=10.86.87.15
          - --ws_ip=10.86.87.15
          - --port=44500
          - --data_path=/data/storage
          - --log_dir=/logs
          - --v=0
          - --minloglevel=2
        deploy:
          replicas: 1
          restart_policy:
            condition: on-failure
          placement:
            constraints:
              - node.hostname == sec-ocr-serving00.py
        depends_on:
          - metad0
          - metad1
          - metad2
        healthcheck:
          test: ["CMD", "curl", "-f", "http://10.86.87.15:12000/status"]
          interval: 30s
          timeout: 10s
          retries: 3
          start_period: 20s
        ports:
          - target: 12000
            published: 12000
            protocol: tcp
            mode: host
          - target: 12002
            published: 12004
            protocol: tcp
            mode: host
        volumes:
          - data-storaged1:/data/storage
          - logs-storaged1:/logs
        networks:
          - nebula-net
      storaged2:
        image: vesoft/nebula-storaged:nightly
        env_file:
          - ./nebula.env
        command:
          - --meta_server_addrs=10.86.87.15:45500,10.86.87.16:45500,10.86.87.17:45500
          - --local_ip=10.86.87.17
          - --ws_ip=10.86.87.17
          - --port=44500
          - --data_path=/data/storage
          - --log_dir=/logs
          - --v=0
          - --minloglevel=2
        deploy:
          replicas: 1
          restart_policy:
            condition: on-failure
          placement:
            constraints:
              - node.hostname == sec-ocr-serving02.py
        depends_on:
          - metad0
          - metad1
          - metad2
        healthcheck:
          test: ["CMD", "curl", "-f", "http://10.86.87.17:12000/status"]
          interval: 30s
          timeout: 10s
          retries: 3
          start_period: 20s
        ports:
          - target: 12000
            published: 12000
            protocol: tcp
            mode: host
          - target: 12002
            published: 12006
            protocol: tcp
            mode: host
        volumes:
          - data-storaged2:/data/storage
          - logs-storaged2:/logs
        networks:
          - nebula-net
      graphd0:
        image: vesoft/nebula-graphd:nightly
        env_file:
          - ./nebula.env
        command:
          - --meta_server_addrs=10.86.87.15:45500,10.86.87.16:45500,10.86.87.17:45500
          - --port=3699
          - --ws_ip=10.86.87.16
          - --log_dir=/logs
          - --v=0
          - --minloglevel=2
        deploy:
          replicas: 1
          restart_policy:
            condition: on-failure
          placement:
            constraints:
              - node.hostname == sec-ocr-serving01.py
        depends_on:
          - metad0
          - metad1
          - metad2
        healthcheck:
          test: ["CMD", "curl", "-f", "http://10.86.87.16:13000/status"]
          interval: 30s
          timeout: 10s
          retries: 3
          start_period: 20s
        ports:
          - target: 3699
            published: 3699
            protocol: tcp
            mode: host
          - target: 13000
            published: 13000
            protocol: tcp
            # mode: host
          - target: 13002
            published: 13002
            protocol: tcp
            mode: host
        volumes:
          - logs-graphd:/logs
        networks:
          - nebula-net
      graphd1:
        image: vesoft/nebula-graphd:nightly
        env_file:
          - ./nebula.env
        command:
          - --meta_server_addrs=10.86.87.15:45500,10.86.87.16:45500,10.86.87.17:45500
          - --port=3699
          - --ws_ip=10.86.87.15
          - --log_dir=/logs
          - --v=2
          - --minloglevel=2
        deploy:
          replicas: 1
          restart_policy:
            condition: on-failure
          placement:
            constraints:
              - node.hostname == sec-ocr-serving00.py
        depends_on:
          - metad0
          - metad1
          - metad2
        healthcheck:
          test: ["CMD", "curl", "-f", "http://10.86.87.15:13001/status"]
          interval: 30s
          timeout: 10s
          retries: 3
          start_period: 20s
        ports:
          - target: 3699
            published: 3640
            protocol: tcp
            mode: host
          - target: 13000
            published: 13001
            protocol: tcp
            mode: host
          - target: 13002
            published: 13003
            protocol: tcp
            # mode: host
        volumes:
          - logs-graphd2:/logs
        networks:
          - nebula-net
      graphd2:
        image: vesoft/nebula-graphd:nightly
        env_file:
          - ./nebula.env
        command:
          - --meta_server_addrs=10.86.87.15:45500,10.86.87.16:45500,10.86.87.17:45500
          - --port=3699
          - --ws_ip=10.86.87.17
          - --log_dir=/logs
          - --v=0
          - --minloglevel=2
        deploy:
          replicas: 1
          restart_policy:
            condition: on-failure
          placement:
            constraints:
              - node.hostname == sec-ocr-serving02.py
        depends_on:
          - metad0
          - metad1
          - metad2
        healthcheck:
          test: ["CMD", "curl", "-f", "http://10.86.87.17:13002/status"]
          interval: 30s
          timeout: 10s
          retries: 3
          start_period: 20s

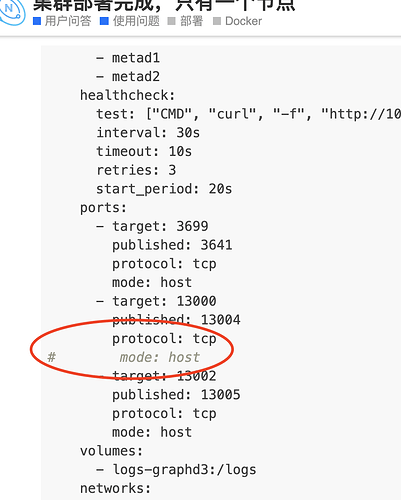

        ports:
          - target: 3699
            published: 3641
            protocol: tcp
            mode: host
          - target: 13000
            published: 13004
            protocol: tcp
            # mode: host
          - target: 13002
            published: 13005
            protocol: tcp
            mode: host
        volumes:
          - logs-graphd3:/logs
        networks:
          - nebula-net
    networks:
      nebula-net:
        external: true
        attachable: true
        name: host
    volumes:
      data-metad0:
      logs-metad0:
      data-metad1:
      logs-metad1:
      data-metad2:
      logs-metad2:
      data-storaged0:
      logs-storaged0:
      data-storaged1:
      logs-storaged1:
      data-storaged2:
      logs-storaged2:
      logs-graphd:
      logs-graphd2:
      logs-graphd3:
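
To see which of the nine services actually answer, the `/status` URLs from the healthcheck entries in the stack file can be probed from any of the three hosts. The following is only a rough sketch: the URLs are copied verbatim from my healthcheck lines, while the helper names `probe` and `report` and the 3-second timeout are my own assumptions and not part of Nebula.

```python
from http.client import HTTPException
from urllib.error import URLError
from urllib.request import urlopen

# URLs copied from the healthcheck entries of the stack file.
ENDPOINTS = {
    "metad0": "http://10.86.87.16:11000/status",
    "metad1": "http://10.86.87.15:11000/status",
    "metad2": "http://10.86.87.17:11000/status",
    "storaged0": "http://10.86.87.16:12000/status",
    "storaged1": "http://10.86.87.15:12000/status",
    "storaged2": "http://10.86.87.17:12000/status",
    "graphd0": "http://10.86.87.16:13000/status",
    "graphd1": "http://10.86.87.15:13001/status",
    "graphd2": "http://10.86.87.17:13002/status",
}


def probe(url, timeout=3.0):
    """Return True if the URL answers with HTTP 200, False on any failure."""
    try:
        with urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except (URLError, OSError, HTTPException):
        # Connection refused, timeout, non-2xx status, or a malformed reply.
        return False


def report(endpoints=ENDPOINTS, timeout=3.0):
    """Probe every endpoint and return a {service: reachable} mapping."""
    results = {}
    for name, url in endpoints.items():
        results[name] = probe(url, timeout=timeout)
        print(f"{name:10} {url:36} {'up' if results[name] else 'DOWN'}")
    return results
```

Calling `report()` prints one line per service, which should make it easier to tell whether the "only one node" symptom comes from services that never started or from services that are running but not reachable on the ports the healthchecks expect.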