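File handles probably aren't counted that way. Besides SST files that are opened directly, other things such as sockets and TCP connections also count, and a file that is opened multiple times is counted once per open.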

With this configuration, do I still need to run a compact now? Should I go ahead and start the compact?

Even allowing for multiple compaction threads, that is still far too many open files. I'd suggest running a compaction.

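Just send it to the support staff member who has been in contact with you. I couldn't find a private-message feature on the forum.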

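I'm not sure whether this is related to Docker; we have never run into it on physical machines. You could check the SST file sizes, though. If some are already 256M, maybe retry a few times? Or open an issue with RocksDB and ask there.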
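For checking the SST sizes, here is a minimal Python sketch that walks a data directory and groups the `.sst` files into size buckets. The function name `sst_size_histogram` and the `bucket_mb` parameter are made up for illustration and are not part of Nebula or RocksDB; point it at whatever directory your storaged `--data_path` maps to on the host.

```python
import os
from collections import Counter


def sst_size_histogram(data_dir, bucket_mb=64):
    """Count .sst files under data_dir, grouped by size rounded up to bucket_mb."""
    bucket_bytes = bucket_mb * 1024 * 1024
    histogram = Counter()
    for root, _dirs, files in os.walk(data_dir):
        for name in files:
            if not name.endswith(".sst"):
                continue
            try:
                size = os.path.getsize(os.path.join(root, name))
            except OSError:
                # A running compaction may delete the file while we walk.
                continue
            # Ceiling division: a 70 MB file lands in the 128 MB bucket.
            bucket = -(-size // bucket_bytes) * bucket_mb
            histogram[bucket] += 1
    return dict(sorted(histogram.items()))


# Example (hypothetical host path):
# for upper_mb, count in sst_size_histogram("/data01/nebula-deploy3/data/storaged0").items():
#     print(f"<= {upper_mb} MB: {count} files")
```

If most files land in the 64 MB bucket, the size-related settings are probably still at their old values; buckets of 256 MB would suggest the new values took effect.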

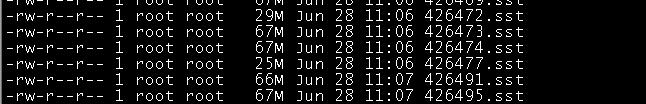

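Question 1: Why didn't the size change? Are these two parameters not the right ones? Also, does the .sst file size have any effect on data queries and writes?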

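Question 2: Once auto compact is turned on, do I still need to run a full compact? The earlier import of 8.9 billion records, which eventually failed with an error, was done with auto compact turned off. This time I am importing with auto compact turned on, and 17 billion records have been imported so far without problems.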

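Most likely the configuration was never applied. I don't know which version you are using; if it still doesn't work, turn on local_config. You can also see the values in effect in the RocksDB LOG file.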

Version: Nebula V2.0.0-GA

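Question 1: I'm currently changing the configuration from the client. Do you mean I should set local_config=true, change the values in the configuration file, and then restart? The .sst files are already 64M now. If the configuration change succeeds at this point, will the existing 64M files be merged automatically?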

Question 2: Also, does local_config use only the local configuration, or does it merge the local configuration with the one from meta?

The data volume mappings in my YAML file

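Question 3: Also, in the rebuilt environment, 20 billion records have been imported so far. As for file handles, this is the count on one of the machines. Have you not seen this before? In your experience, is this normal?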

local_config uses only the local configuration.

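That fd count is excessively large; all the SST files added together don't come to that many. I checked an environment on my side: the SST files are also 64M, the total data size is 75G, and lsof -p pid shows only about 5,000.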
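For comparison, 75G of data at 64M per file works out to roughly 1,200 SST files, so the rest of the 5,000 would be sockets, logs, or files held open more than once. To see where a large fd count comes from, one option is to break down the symlink targets under /proc/<pid>/fd by type. The sketch below is only an illustration: the function names and categories are made up here, and it assumes Linux with permission to read the storaged process's fd directory.

```python
import os
from collections import Counter

DELETED_SUFFIX = " (deleted)"


def classify_fd_target(target):
    """Map one /proc/<pid>/fd symlink target to a coarse category."""
    if target.startswith("socket:"):
        return "socket"
    if target.startswith(("pipe:", "anon_inode:")):
        return "pipe/anon"
    # Files removed by compaction but still held open show up with this suffix.
    if target.endswith(DELETED_SUFFIX):
        target = target[: -len(DELETED_SUFFIX)]
    if target.endswith(".sst"):
        return "sst"
    return "other"


def summarize_fd_targets(targets):
    """Summarize fd targets: totals per category and repeated opens of one SST."""
    targets = list(targets)
    by_kind = Counter(classify_fd_target(t) for t in targets)
    sst_opens = Counter(t for t in targets if classify_fd_target(t) == "sst")
    return {
        "total": len(targets),
        "by_kind": dict(by_kind),
        "distinct_sst": len(sst_opens),
        "sst_opened_more_than_once": sum(1 for n in sst_opens.values() if n > 1),
    }


def read_fd_targets(pid):
    """Read every fd symlink target of a process (Linux only)."""
    fd_dir = f"/proc/{pid}/fd"
    targets = []
    for fd in os.listdir(fd_dir):
        try:
            targets.append(os.readlink(os.path.join(fd_dir, fd)))
        except OSError:
            continue  # fd was closed between listdir and readlink
    return targets


# Example: print(summarize_fd_targets(read_fd_targets(12345)))  # 12345 is a placeholder pid
```

If `distinct_sst` is far below the `sst` count in `by_kind`, the same files are being opened repeatedly, which would match the point that a file opened multiple times is counted multiple times.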

My YAML file is as follows:

version: '3.6'
services:
  metad0:
    container_name: nebula-mate0
    image: vesoft/nebula-metad:v2.0.0
    environment:
      USER: root
      TZ: Asia/Shanghai
    command:
      - --meta_server_addrs=REDACTED_IP:45500,REDACTED_IP:45500,REDACTED_IP:45500
      - --local_ip=REDACTED_IP
      - --ws_ip=REDACTED_IP
      - --port=45500
      - --ws_http_port=11000
      - --data_path=/data/meta
      - --log_dir=/logs
      - --v=0
      - --minloglevel=0
      - --timezone_name=CST
    deploy:
      replicas: 1
      restart_policy:
        condition: on-failure
      placement:
        constraints:
          - node.hostname == dggbplinkx00004
    healthcheck:
      test: ["CMD", "curl", "-f", "http://REDACTED_IP:11000/status"]
      interval: 30s
      timeout: 10s
      retries: 3
      start_period: 20s
    ports:
      - target: 11000
        published: 11000
        protocol: tcp
        mode: host
      - target: 11002
        published: 11002
        protocol: tcp
        mode: host
      - target: 45500
        published: 45500
        protocol: tcp
        mode: host
    volumes:
      - /data01/nebula-deploy3/data/meta0:/data/meta
      - /data01/nebula-deploy3/logs/meta0:/logs
      - /data01/nebula-deploy3/etc/meta0:/usr/local/nebula/etc
    networks:
      - nebula-net
  metad1:
    container_name: nebula-mate1
    image: vesoft/nebula-metad:v2.0.0
    environment:
      USER: root
      TZ: Asia/Shanghai
    command:
      - --meta_server_addrs=REDACTED_IP:45500,REDACTED_IP:45500,REDACTED_IP:45500
      - --local_ip=REDACTED_IP
      - --ws_ip=REDACTED_IP
      - --port=45500
      - --ws_http_port=11000
      - --data_path=/data/meta
      - --log_dir=/logs
      - --v=0
      - --minloglevel=0
      - --timezone_name=CST
    deploy:
      replicas: 1
      restart_policy:
        condition: on-failure
      placement:
        constraints:
          - node.hostname == dggbplinkx00005
    healthcheck:
      test: ["CMD", "curl", "-f", "http://REDACTED_IP:11000/status"]
      interval: 30s
      timeout: 10s
      retries: 3
      start_period: 20s
    ports:
      - target: 11000
        published: 11000
        protocol: tcp
        mode: host
      - target: 11002
        published: 11002
        protocol: tcp
        mode: host
      - target: 45500
        published: 45500
        protocol: tcp
        mode: host
    volumes:
      - /data01/nebula-deploy3/data/meta1:/data/meta
      - /data01/nebula-deploy3/logs/meta1:/logs
      - /data01/nebula-deploy3/etc/meta1:/usr/local/nebula/etc
    networks:
      - nebula-net
  metad2:
    container_name: nebula-mate2
    image: vesoft/nebula-metad:v2.0.0
    environment:
      USER: root
      TZ: Asia/Shanghai
    command:
      - --meta_server_addrs=REDACTED_IP:45500,REDACTED_IP:45500,REDACTED_IP:45500
      - --local_ip=REDACTED_IP
      - --ws_ip=REDACTED_IP
      - --port=45500
      - --ws_http_port=11000
      - --data_path=/data/meta
      - --log_dir=/logs
      - --v=0
      - --minloglevel=0
      - --timezone_name=CST
    deploy:
      replicas: 1
      restart_policy:
        condition: on-failure
      placement:
        constraints:
          - node.hostname == dggbplinkx00006
    healthcheck:
      test: ["CMD", "curl", "-f", "http://REDACTED_IP:11000/status"]
      interval: 30s
      timeout: 10s
      retries: 3
      start_period: 20s
    ports:
      - target: 11000
        published: 11000
        protocol: tcp
        mode: host
      - target: 11002
        published: 11002
        protocol: tcp
        mode: host
      - target: 45500
        published: 45500
        protocol: tcp
        mode: host
    volumes:
      - /data01/nebula-deploy3/data/meta2:/data/meta
      - /data01/nebula-deploy3/logs/meta2:/logs
      - /data01/nebula-deploy3/etc/meta2:/usr/local/nebula/etc
    networks:
      - nebula-net
  storaged0:
    container_name: nebula-storaged0
    image: vesoft/nebula-storaged:v2.0.0
    environment:
      USER: root
      TZ: Asia/Shanghai
    command:
      - --meta_server_addrs=REDACTED_IP:45500,REDACTED_IP:45500,REDACTED_IP:45500
      - --local_ip=REDACTED_IP
      - --ws_ip=REDACTED_IP
      - --port=44500
      - --ws_http_port=12000
      - --data_path=/data/storage
      - --log_dir=/logs
      - --v=0
      - --minloglevel=0
      - --timezone_name=CST
      - --auto_remove_invalid_space=true
      - --enable_partitioned_index_filter=true
      - --local-config=true
    deploy:
      replicas: 1
      restart_policy:
        condition: on-failure
      placement:
        constraints:
          - node.hostname == dggbplinkx00004
    depends_on:
      - metad0
      - metad1
      - metad2
    healthcheck:
      test: ["CMD", "curl", "-f", "http://REDACTED_IP:12000/status"]
      interval: 30s
      timeout: 10s
      retries: 3
      start_period: 20s
    ports:
      - target: 12000
        published: 12000
        protocol: tcp
        mode: host
      - target: 12002
        published: 12002
        protocol: tcp
        mode: host
    volumes:
      - /data01/nebula-deploy3/data/storaged0:/data/storage
      - /data01/nebula-deploy3/logs/storaged0:/logs
      - /data01/nebula-deploy3/etc/storaged0:/usr/local/nebula/etc
    networks:
      - nebula-net
  storaged1:
    container_name: nebula-storaged1
    image: vesoft/nebula-storaged:v2.0.0
    environment:
      USER: root
      TZ: Asia/Shanghai
    command:
      - --meta_server_addrs=REDACTED_IP:45500,REDACTED_IP:45500,REDACTED_IP:45500
      - --local_ip=REDACTED_IP
      - --ws_ip=REDACTED_IP
      - --port=44500
      - --ws_http_port=12000
      - --data_path=/data/storage
      - --log_dir=/logs
      - --v=0
      - --minloglevel=0
      - --timezone_name=CST
      - --auto_remove_invalid_space=true
      - --enable_partitioned_index_filter=true
      - --local-config=true
    deploy:
      replicas: 1
      restart_policy:
        condition: on-failure
      placement:
        constraints:
          - node.hostname == dggbplinkx00005
    depends_on:
      - metad0
      - metad1
      - metad2
    healthcheck:
      test: ["CMD", "curl", "-f", "http://REDACTED_IP:12000/status"]
      interval: 30s
      timeout: 10s
      retries: 3
      start_period: 20s
    ports:
      - target: 12000
        published: 12000
        protocol: tcp
        mode: host
      - target: 12002
        published: 12004
        protocol: tcp
        mode: host
    volumes:
      - /data01/nebula-deploy3/data/storaged1:/data/storage
      - /data01/nebula-deploy3/logs/storaged1:/logs
      - /data01/nebula-deploy3/etc/storaged1:/usr/local/nebula/etc
    networks:
      - nebula-net
  storaged2:
    container_name: nebula-storaged2
    image: vesoft/nebula-storaged:v2.0.0
    environment:
      USER: root
      TZ: Asia/Shanghai
    command:
      - --meta_server_addrs=REDACTED_IP:45500,REDACTED_IP:45500,REDACTED_IP:45500
      - --local_ip=REDACTED_IP
      - --ws_ip=REDACTED_IP
      - --port=44500
      - --ws_http_port=12000
      - --data_path=/data/storage
      - --log_dir=/logs
      - --v=0
      - --minloglevel=0
      - --timezone_name=CST
      - --auto_remove_invalid_space=true
      - --local-config=true
      - --enable_partitioned_index_filter=true
      - --local-config=true
    deploy:
      replicas: 1
      restart_policy:
        condition: on-failure
      placement:
        constraints:
          - node.hostname == dggbplinkx00006
    depends_on:
      - metad0
      - metad1
      - metad2
    healthcheck:
      test: ["CMD", "curl", "-f", "http://REDACTED_IP:12000/status"]
      interval: 30s
      timeout: 10s
      retries: 3
      start_period: 20s
    ports:
      - target: 12000
        published: 12000
        protocol: tcp
        mode: host
      - target: 12002
        published: 12006
        protocol: tcp
        mode: host
    volumes:
      - /data01/nebula-deploy3/data/storaged2:/data/storage
      - /data01/nebula-deploy3/logs/storaged2:/logs
      - /data01/nebula-deploy3/etc/storaged2:/usr/local/nebula/etc
    networks:
      - nebula-net
  graphd0:
    container_name: nebula-graphd0
    image: vesoft/nebula-graphd:v2.0.0
    environment:
      USER: root
      TZ: Asia/Shanghai
    command:
      - --meta_server_addrs=REDACTED_IP:45500,REDACTED_IP:45500,REDACTED_IP:45500
      - --port=3699
      - --ws_http_port=13000
      - --ws_ip=REDACTED_IP
      - --log_dir=/logs
      - --v=0
      - --minloglevel=0
      - --timezone_name=CST
    deploy:
      replicas: 1
      restart_policy:
        condition: on-failure
      placement:
        constraints:
          - node.hostname == dggbplinkx00004
    depends_on:
      - metad0
      - metad1
      - metad2
    healthcheck:
      test: ["CMD", "curl", "-f", "http://REDACTED_IP:13000/status"]
      interval: 30s
      timeout: 10s
      retries: 3
      start_period: 20s
    ports:
      - target: 3699
        published: 3699
        protocol: tcp
        mode: host
      - target: 13000
        published: 13000
        protocol: tcp
        mode: host
      - target: 13002
        published: 13002
        protocol: tcp
        mode: host
    volumes:
      - /data01/nebula-deploy3/logs/graphd0:/logs
      - /data01/nebula-deploy3/etc/graphd0:/usr/local/nebula/etc
    networks:
      - nebula-net
  graphd1:
    container_name: nebula-graphd1
    image: vesoft/nebula-graphd:v2.0.0
    environment:
      USER: root
      TZ: Asia/Shanghai
    command:
      - --meta_server_addrs=REDACTED_IP:45500,REDACTED_IP:45500,REDACTED_IP:45500
      - --port=3699
      - --ws_http_port=13000
      - --ws_ip=REDACTED_IP
      - --log_dir=/logs
      - --v=0
      - --minloglevel=0
      - --timezone_name=CST
    deploy:
      replicas: 1
      restart_policy:
        condition: on-failure
      placement:
        constraints:
          - node.hostname == dggbplinkx00005
    depends_on:
      - metad0
      - metad1
      - metad2
    healthcheck:
      test: ["CMD", "curl", "-f", "http://REDACTED_IP:13000/status"]
      interval: 30s
      timeout: 10s
      retries: 3
      start_period: 20s
    ports:
      - target: 3699
        published: 3699
        protocol: tcp
        mode: host
      - target: 13000
        published: 13000
        protocol: tcp
        mode: host
      - target: 13002
        published: 13002
        protocol: tcp
        mode: host
    volumes:
      - /data01/nebula-deploy3/logs/graphd1:/logs
      - /data01/nebula-deploy3/etc/graphd1:/usr/local/nebula/etc
    networks:
      - nebula-net
  graphd2:
    container_name: nebula-graphd2
    image: vesoft/nebula-graphd:v2.0.0
    environment:
      USER: root
      TZ: Asia/Shanghai
    command:
      - --meta_server_addrs=REDACTED_IP:45500,REDACTED_IP:45500,REDACTED_IP:45500
      - --port=3699
      - --ws_http_port=13000
      - --ws_ip=REDACTED_IP
      - --log_dir=/logs
      - --v=0
      - --minloglevel=0
      - --timezone_name=CST
    deploy:
      replicas: 1
      restart_policy:
        condition: on-failure
      placement:
        constraints:
          - node.hostname == dggbplinkx00006
    depends_on:
      - metad0
      - metad1
      - metad2
    healthcheck:
      test: ["CMD", "curl", "-f", "http://REDACTED_IP:13000/status"]
      interval: 30s
      timeout: 10s
      retries: 3
      start_period: 20s
    ports:
      - target: 3699
        published: 3699
        protocol: tcp
        mode: host
      - target: 13000
        published: 13000
        protocol: tcp
        mode: host
      - target: 13002
        published: 13002
        protocol: tcp
        mode: host
    volumes:
      - /data01/nebula-deploy3/logs/graphd2:/logs
      - /data01/nebula-deploy3/etc/graphd2:/usr/local/nebula/etc
    networks:
      - nebula-net
networks:
  nebula-net:
    external: true
    attachable: true
    name: host