Nebula version: 2.0.1

Deployed from a source build, compiled into a standard installation package.

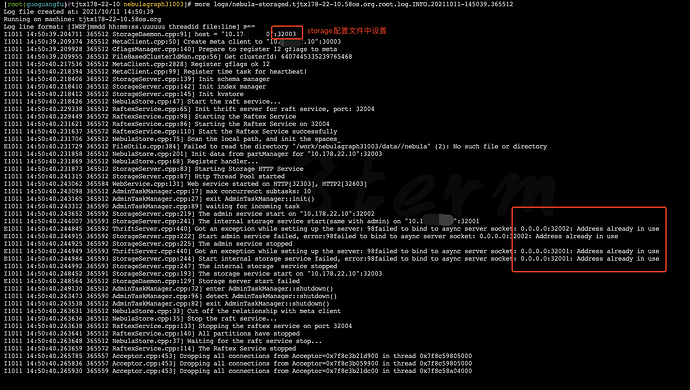

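Multiple instances are deployed on a single machine. The newly deployed storage node uses port 31003, but it fails to start and always reports: 98failed to bind to async server socket: 0.0.0.0:32001: Address already in use. The full error output follows: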

more logs/nebula-storaged.tjtx178-22-10.58os.org.root.log.WARNING.20211011-145040.365512

Log file created at: 2021/10/11 14:50:40

Running on machine: tjtx178-22-10.58os.org

Log line format: [IWEF]mmdd hh:mm:ss.uuuuuu threadid file:line] msg

E1011 14:50:40.231729 365512 FileUtils.cpp:384] Failed to read the directory "/work/nebulagraph31003/data//nebula" (2): No such file or directory

E1011 14:50:40.244845 365592 ThriftServer.cpp:440] Got an exception while setting up the server: 98failed to bind to async server socket: 0.0.0.0:32002: Address already in use

E1011 14:50:40.244915 365592 StorageServer.cpp:222] Start admin service failed, error:98failed to bind to async server socket: 0.0.0.0:32002: Address already in use

E1011 14:50:40.244949 365593 ThriftServer.cpp:440] Got an exception while setting up the server: 98failed to bind to async server socket: 0.0.0.0:32001: Address already in use

E1011 14:50:40.244984 365593 StorageServer.cpp:244] Start internal storage service failed, error:98failed to bind to async server socket: 0.0.0.0:32001: Address already in use

E1011 14:50:40.248564 365512 StorageDaemon.cpp:129] Storage server start failed
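To see at a glance which ports the daemon could not bind, the failing addresses can be pulled out of the log with a regular expression. This is a minimal sketch: the pattern is written against the exact message text shown above, and `failed_bind_ports` and `SAMPLE_LOG` are illustrative names, not part of Nebula.

```python
import re

# Pattern for the bind failures that ThriftServer.cpp reports in the log above.
BIND_FAILURE = re.compile(
    r"failed to bind to async server socket: "
    r"(?P<host>[0-9.]+):(?P<port>\d+): Address already in use"
)


def failed_bind_ports(log_text):
    """Return the distinct ports that could not be bound, in first-seen order."""
    ports = []
    for match in BIND_FAILURE.finditer(log_text):
        port = int(match.group("port"))
        if port not in ports:
            ports.append(port)
    return ports


# Two lines copied from the WARNING log above.
SAMPLE_LOG = """\
E1011 14:50:40.244845 365592 ThriftServer.cpp:440] Got an exception while setting up the server: 98failed to bind to async server socket: 0.0.0.0:32002: Address already in use
E1011 14:50:40.244949 365593 ThriftServer.cpp:440] Got an exception while setting up the server: 98failed to bind to async server socket: 0.0.0.0:32001: Address already in use
"""

print(failed_bind_ports(SAMPLE_LOG))
```

Running it against the full log file instead of `SAMPLE_LOG` only requires reading the file into a string first.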

Screenshot of the INFO log:

The red box at the top marks the port set in the configuration file, yet the log keeps trying to bind 32001 and 32002.
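Before restarting the node, it may help to confirm which of these ports are really occupied on the machine. The sketch below simply tries to bind each port. `port_is_free` is a hypothetical helper, and the port list combines `--port=32003` from the configuration file with the two ports named in the log.

```python
import socket


def port_is_free(port, host="0.0.0.0"):
    """Return True if a TCP socket can currently be bound to host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError:
            # Covers "Address already in use" as well as permission errors.
            return False
        return True


if __name__ == "__main__":
    # 32003 is --port from the config; 32001 and 32002 are the ports in the log.
    for port in (32001, 32002, 32003):
        state = "free" if port_is_free(port) else "in use"
        print(f"{port}: {state}")
```

A port reported as "in use" here is most likely held by another instance on the same host. Note that the check only reflects the moment it runs.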

Configuration file of the 31003 storage node:

cat /work/nebulagraph31003/etc/nebula-storaged.conf

########## basics ##########

--daemonize=true

--pid_file=/work/nebulagraph31003/pids/nebula-storaged.pid

########## logging ##########

--log_dir=/work/nebulagraph31003/logs

--minloglevel=0

--v=0

--logbufsecs=0

--redirect_stdout=true

--stdout_log_file=storaged-stdout.log

--stderr_log_file=storaged-stderr.log

--stderrthreshold=2

########## networking ##########

--meta_server_addrs=ip1:30003,10.178.22.10:ip2,10.178.23.219:ip2

--local_ip=ip1

--port=32003

--ws_ip=0.0.0.0

--ws_http_port=32303

--ws_h2_port=32603

--heartbeat_interval_secs=10

######### Raft #########

--raft_heartbeat_interval_secs=30

--raft_rpc_timeout_ms=500

--wal_ttl=14400

########## Disk ##########

--data_path=/work/nebulagraph31003/data/

--rocksdb_batch_size=4096

--rocksdb_block_cache=4096

--rocksdb_compression=lz4

--rocksdb_compression_per_level=

############## rocksdb Options ##############

--rocksdb_db_options={"max_subcompactions":"4","max_background_jobs":"4"}

--rocksdb_column_family_options={"disable_auto_compactions":"false","write_buffer_size":"67108864","max_write_buffer_number":"4","max_bytes_for_level_base":"268435456"}

--rocksdb_block_based_table_options={"block_size":"8192"}

--enable_rocksdb_statistics=false

--rocksdb_stats_level=kExceptHistogramOrTimers

--enable_rocksdb_prefix_filtering=false

--enable_rocksdb_whole_key_filtering=true

--rocksdb_filtering_prefix_length=12

############### misc ####################

--max_handlers_per_req=1
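With several instances on one machine, overlapping ports can also be spotted statically by comparing the configuration files. The following is a rough sketch under one loud assumption: that storaged binds `port-1` and `port-2` in addition to `--port`, which is inferred only from the log in this post (32002 and 32001 with `--port=32003`). The second configuration, `OTHER_INSTANCE`, is hypothetical and not taken from the post.

```python
def parse_flags(conf_text):
    """Parse gflags-style lines (--name=value) into a dict, skipping comments."""
    flags = {}
    for line in conf_text.splitlines():
        line = line.strip()
        if not line.startswith("--") or "=" not in line:
            continue
        name, value = line[2:].split("=", 1)
        flags[name] = value
    return flags


def storaged_ports(flags):
    """Ports one storaged instance is expected to bind.

    ASSUMPTION: besides --port, the daemon binds port-1 and port-2. This is
    inferred only from the log in this post, not from Nebula's source.
    """
    port = int(flags["port"])
    ports = {port, port - 1, port - 2}
    for name in ("ws_http_port", "ws_h2_port"):
        if name in flags:
            ports.add(int(flags[name]))
    return ports


# Networking flags copied from the configuration file above.
NEW_INSTANCE = """\
########## networking ##########
--port=32003
--ws_http_port=32303
--ws_h2_port=32603
"""

# Hypothetical neighbour on the same machine, not taken from the post.
OTHER_INSTANCE = """\
--port=32002
--ws_http_port=32302
--ws_h2_port=32602
"""

new_ports = storaged_ports(parse_flags(NEW_INSTANCE))
other_ports = storaged_ports(parse_flags(OTHER_INSTANCE))
print(sorted(new_ports & other_ports))
```

If the assumption holds, a non-empty intersection means the `--port` values of the two instances are spaced too closely.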