- nebula version: 2.6.2

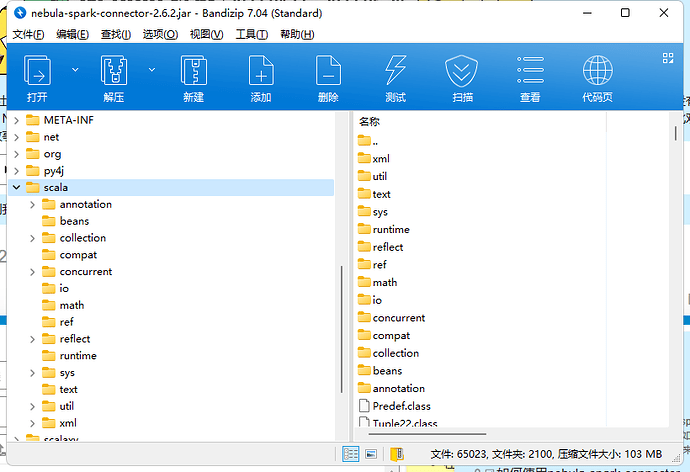

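I have a Spark project and pull in spark-connector through Maven using the dependency below. No matter how I package the project, the resulting jar always contains a `scala` folder, which causes a Scala conflict when I upload it to the Spark cluster. How can I get rid of it?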

<dependency>
  <groupId>com.vesoft</groupId>
  <artifactId>nebula-spark-connector</artifactId>
  <version>2.6.2</version>
  <exclusions>
    <exclusion>
      <artifactId>spark-graphx_2.11</artifactId>
      <groupId>org.apache.spark</groupId>
    </exclusion>
    <exclusion>
      <artifactId>scala-reflect</artifactId>
      <groupId>org.scala-lang</groupId>
    </exclusion>
    <exclusion>
      <artifactId>scalatest-core_2.11</artifactId>
      <groupId>org.scalatest</groupId>
    </exclusion>
    <exclusion>
      <artifactId>scala-library</artifactId>
      <groupId>org.scala-lang</groupId>
    </exclusion>
    <exclusion>
      <artifactId>spark-core_2.11</artifactId>
      <groupId>org.apache.spark</groupId>
    </exclusion>
    <exclusion>
      <artifactId>spark-sql_2.11</artifactId>
      <groupId>org.apache.spark</groupId>
    </exclusion>
    <exclusion>
      <artifactId>scalatest-funsuite_2.11</artifactId>
      <groupId>org.scalatest</groupId>
    </exclusion>
  </exclusions>
</dependency>
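One way to narrow this down is to check which jar actually carries the `scala/` entries. Maven `<exclusions>` only drop transitive dependencies, so they cannot remove classes that are already packed inside a dependency's own jar. The sketch below is a small diagnostic, not a fix: `scala_entries` is a hypothetical helper name, and the jar paths in the usage comment are assumptions about a typical local layout.

```python
import zipfile


def scala_entries(jar, prefix="scala/"):
    """Return the names of all entries in the jar that live under `prefix`.

    `jar` may be a filesystem path or a binary file-like object,
    since a jar is an ordinary zip archive.
    """
    with zipfile.ZipFile(jar) as archive:
        return [name for name in archive.namelist() if name.startswith(prefix)]


# Hypothetical usage (adjust the paths to your own build and local repository):
#   scala_entries("target/my-spark-job.jar")
#   scala_entries("/path/to/.m2/repository/com/vesoft/nebula-spark-connector/"
#                 "2.6.2/nebula-spark-connector-2.6.2.jar")
```

If the connector jar on its own already returns a non-empty list, the exclusions above will not help, and the `scala/**` entries would have to be filtered out at packaging time instead (for example with a filter in whatever plugin builds the fat jar). If only the final packaged jar contains them, some other dependency is still pulling in the Scala library.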